The content on this page has been converted from PDF to HTML format using an artificial intelligence (AI) tool as part of our ongoing efforts to improve accessibility and usability of our publications. Note:

- No human verification has been conducted of the converted content.

- While we strive for accuracy, errors or omissions may exist.

- This content is provided for informational purposes only and should not be relied upon as a definitive or authoritative source.

- For the official and verified version of the publication, refer to the original PDF document.

If you identify any inaccuracies or have concerns about the content, please contact us at [email protected].

Generative and Agentic AI Guidance factsheet

The FRC supports innovation and the appropriate use of artificial intelligence (AI) to promote high-quality audit, growth in the UK economy and the public interest. Generative AI (GenAI) and agentic AI tools have the potential to enhance audit quality, but they also pose risks to audit quality that need to be mitigated.

The FRC's Generative and Agentic AI guidance discusses audit quality risks associated with GenAI and agentic AI tools, alongside possible mitigations and principles for exercising professional judgement when seeking to obtain appropriate confidence in the outputs of these tools. The guidance provides illustrative examples that set out potential risks and mitigations for two use cases.

The guidance was developed as part of the FRC's technology working group, which includes representatives from eight audit firms. It has been developed primarily for those in central functions at audit firms who are responsible for the development of GenAI and agentic AI tools and supporting methodologies. It may contain content that is of interest to other stakeholders, including audit engagement teams, audit committees and third-party technology providers, but these are not the primary audiences.

The guidance responds to the increasing use of GenAI and the development of agentic AI tools within audit firms. The guidance is intended to codify good practice that we have seen, promote audit quality, build confidence in the use of these technologies, and provide a conceptual foundation for future FRC work in this area. The firm and engagement partner retain full responsibility for audit quality, in accordance with ISQM (UK) 1 and ISA (UK) 220 respectively.

What is Generative and Agentic AI?

Generative AI (“GenAI”)

AI technologies that can generate content in response to prompts, using learned patterns from large training datasets. Large language models ("LLMs") are a specific form of GenAI that consume, interpret, and generate text.

A GenAI tool could, for example, translate documents for the auditor to review.

Agentic AI

Artificial intelligence systems that can orchestrate and execute multiple components and/or tasks toward a goal, with some degree of autonomy. These systems typically include one or more LLMs that serve as both the orchestrating “brain” and as part of the execution layer, performing functions such as researching, reasoning, generating content or critiquing outputs.

An agentic AI tool can, with appropriate human oversight, perform a variety of tasks on an audit, including audit procedures, or elements of them, and ancillary activities.

What is currently being used?

Firms are at varying stages of adopting GenAI and agentic AI; some have chosen to develop in-house solutions, whilst others are opting for third-party software. Current and potential uses of GenAI and agentic AI tools include:

- Tools automatically matching supporting documents to samples

- Automated checks of financial statement mathematical accuracy

- Summarising meeting minutes

- Chat bots assisting audit teams with queries

- Translating documents to or from local languages

- Contract review tool evaluating contracts to classify or stratify them

- Agentic tools automating certain audit procedures
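As a rough illustration of the mathematical accuracy use case listed above, the deterministic part of such a check could be sketched as follows. This is a minimal sketch and is not taken from the FRC guidance: the function name `check_casting`, the `tolerance` parameter and the figures are hypothetical, and the sketch assumes the line items have already been extracted from the financial statements. Any difference it reports would still need to be followed up by the auditor.

```python
from decimal import Decimal


def check_casting(line_items, reported_total, tolerance=Decimal("0.00")):
    """Recompute a total from its line items and compare it with the reported figure.

    Hypothetical helper for illustration only. Amounts are passed as strings
    and converted to Decimal to avoid binary floating-point rounding.
    """
    recomputed = sum((Decimal(str(v)) for v in line_items.values()), Decimal("0"))
    difference = recomputed - Decimal(str(reported_total))
    return {
        "recomputed": recomputed,
        "difference": difference,
        "agrees": abs(difference) <= tolerance,
    }


# Hypothetical figures, not taken from any real set of financial statements.
current_assets = {
    "Inventories": "1250.00",
    "Trade receivables": "3400.50",
    "Cash": "980.25",
}

result = check_casting(current_assets, "5630.75")
mismatch = check_casting(current_assets, "5630.00")
```

Here `result["agrees"]` is true because the line items sum to the reported total, while `mismatch["difference"]` holds the amount by which the recomputed total differs from the reported figure.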

Risks and mitigations

The guidance sets out three categories of risk posed by the use of GenAI and agentic AI tools to audit quality. Other risks exist, such as cybersecurity and data risks, but the scope of this guidance is limited to risks to audit quality.

| Risk Type | Description |
|---|---|
| Risk of misuse of output | The risk that an output from an AI tool is misinterpreted or misunderstood by the user, possibly leading to an inappropriate conclusion in the audit. For example, if a tool designed for one task produces a plausible-looking output for another, that output may be inappropriately relied on. |
| Risk of deficient output | The risk that issues in system inputs or the design of the system result in a deficient output, which may then be relied upon during the audit. The risk cannot be eliminated as LLMs have inherent limitations. For example, LLMs have a finite capacity, which can lead to oversimplification of outputs. |
| Risk of non-compliant methodology | The risk that the firm's methodology permits approaches, which include the use of AI tools, that fail to meet auditing standards. It may be challenging to compare the persuasiveness of outputs of an AI tool to evidence obtained from more traditional approaches, making it difficult to ascertain if the requirements of the auditing standards have been met. |

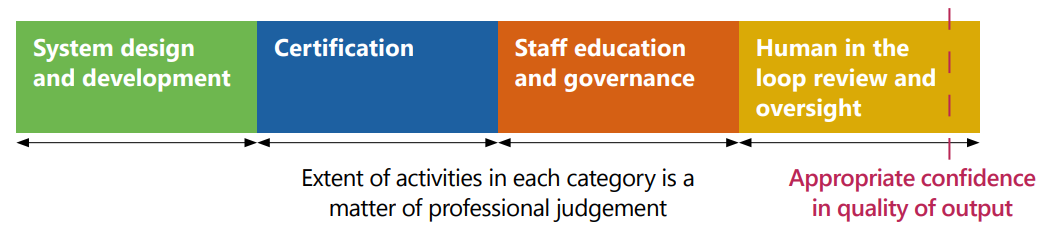

Audit quality risks can be mitigated in a number of ways. Performing some mitigating activities from each of the categories below may be appropriate for all tools and use cases. However, the efficiency of each form of mitigation may vary significantly across tools and use cases, so the firm may exercise professional judgement to determine how to obtain appropriate confidence.

This diagram illustrates the relationship between different categories of mitigation activities and their impact on audit quality. It shows four categories of activities leading to appropriate confidence in quality of output:

- System design and development

- Certification

- Staff education and governance

- Human in the loop review and oversight

All these categories contribute to achieving "Appropriate confidence in quality of output". A caption notes: "Extent of activities in each category is a matter of professional judgement".

Financial Reporting Council

+44 (0)20 7492 2300 | www.frc.org.uk
